Physical AI: From Pixels to Pavements

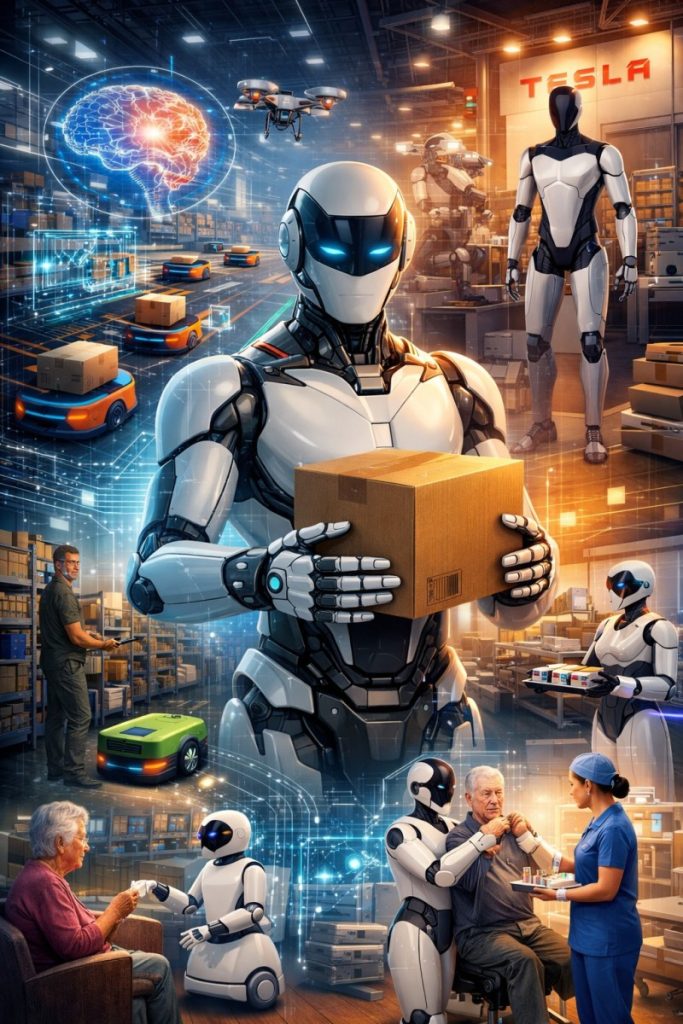

Physical AI has transformed artificial intelligence from a purely digital phenomenon into systems capable of perceiving, moving, and interacting with the real world. For much of its history, AI could analyze data, generate language, and optimize decisions, yet it remained disconnected from physical environments. Traditional AI could simulate intelligence, but it could not feel resistance, judge balance, or adapt to the unpredictability of real-world conditions.

With Physical AI, that gap is closing. Robots are no longer confined to controlled environments or narrowly defined tasks, and they are beginning to function as autonomous agents in the physical world.

What Is Embodied AI?

Embodied AI refers to artificial intelligence systems that are tightly integrated with physical form and sensory perception. Rather than executing fixed instructions, these systems continuously interpret input from their environment and adjust their actions in real time.

Traditional industrial robots operate by following precise, pre-programmed coordinates, repeating the same movement regardless of external changes. By contrast, Embodied AI systems rely on vision, LiDAR, force sensors, and tactile feedback to build a dynamic understanding of their surroundings.

This sensory awareness enables a fundamentally different set of capabilities:

- Environmental Adaptability: Robots detect and compensate for misaligned, damaged, or inconsistent objects automatically.

- Learning Through Demonstration: Using imitation learning and digital twin simulations, robots acquire skills by observing humans or practicing in virtual environments.

- Tactile and Force Intelligence: Advanced sensors allow precise grip and pressure control, enabling robots to handle fragile, flexible, or irregular objects safely.

Together, these capabilities transform robots from mechanical tools into adaptive participants in physical workflows.
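The contrast between fixed coordinates and sensor-driven adjustment can be illustrated with a minimal Python sketch. It is a simplified, one-dimensional illustration and not any vendor's controller: the function names, the proportional gain, and the tolerance are all assumptions chosen for the example.

```python
from typing import Callable, Tuple


def reach_fixed(programmed_mm: float, actual_mm: float) -> float:
    """Pre-programmed motion: go to the stored coordinate and stop.

    Nothing is sensed, so the returned value is the error left over
    when the part is not where the program expects it.
    """
    return abs(actual_mm - programmed_mm)


def reach_with_feedback(
    start_mm: float,
    sense_target_mm: Callable[[], float],  # hypothetical sensor read-out
    gain: float = 0.5,                     # assumed proportional gain
    tolerance_mm: float = 0.01,
    max_steps: int = 100,
) -> Tuple[float, int]:
    """Sensor-driven motion: measure the error, correct part of it, repeat."""
    position = start_mm
    for step in range(max_steps):
        error = sense_target_mm() - position
        if abs(error) <= tolerance_mm:
            return position, step
        position += gain * error
    return position, max_steps


if __name__ == "__main__":
    programmed, actual = 10.0, 10.3  # the part sits 0.3 mm off its nominal spot
    print("fixed-coordinate error:", reach_fixed(programmed, actual))
    final, steps = reach_with_feedback(programmed, lambda: actual)
    print("feedback error:", abs(actual - final), "after", steps, "corrections")
```

The fixed-coordinate version keeps the full misalignment as error, while the feedback loop shrinks it on every sensor reading until it falls within tolerance. Real systems fuse several sensors and control many joints at once, but the loop structure is the same idea.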

Amazon: A Large-Scale Testbed for Physical AI

Amazon represents the largest real-world deployment of Physical AI to date. As of 2026, the company operates a global fleet of over one million robots, turning its logistics network into a living laboratory for embodied intelligence.

Initially, deployments focused on simple, repetitive tasks. However, Amazon now uses a set of interconnected systems (DeepFleet, Vulcan, Proteus, and Sequoia) to create a unified ecosystem of adaptive, learning-enabled robots.

DeepFleet: Coordinating Intelligence at Scale

DeepFleet is a generative AI foundation model that coordinates thousands of robots simultaneously. Within fulfillment centers, robots navigate shared spaces and cross paths constantly. DeepFleet predicts congestion, anticipates conflicts, and reroutes robots in real time. This improves travel efficiency by roughly 10%, which in turn reduces energy consumption and operational costs.
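DeepFleet is a learned foundation model, and its internals are not described here. As a rough classical stand-in for the idea of rerouting around predicted congestion, the Python sketch below runs Dijkstra's algorithm on a grid in which entering a cell costs more when other robots are expected there. The grid layout, the `plan_route` name, and the `penalty` weight are assumptions made for illustration only.

```python
import heapq
from typing import Dict, List, Optional, Tuple

Cell = Tuple[int, int]


def plan_route(
    grid_size: Tuple[int, int],
    start: Cell,
    goal: Cell,
    congestion: Dict[Cell, int],  # assumed forecast: robots expected per cell
    penalty: float = 5.0,         # assumed extra cost per expected robot
) -> Tuple[Optional[List[Cell]], float]:
    """Cheapest 4-connected path where each move costs 1 plus a congestion penalty."""
    rows, cols = grid_size
    frontier = [(0.0, start, [start])]
    best = {start: 0.0}
    while frontier:
        cost, cell, path = heapq.heappop(frontier)
        if cell == goal:
            return path, cost
        if cost > best.get(cell, float("inf")):
            continue  # stale queue entry; a cheaper route to this cell exists
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if not (0 <= nxt[0] < rows and 0 <= nxt[1] < cols):
                continue
            new_cost = cost + 1.0 + penalty * congestion.get(nxt, 0)
            if new_cost < best.get(nxt, float("inf")):
                best[nxt] = new_cost
                heapq.heappush(frontier, (new_cost, nxt, path + [nxt]))
    return None, float("inf")


if __name__ == "__main__":
    # Empty floor: the robot takes the direct aisle.
    print(plan_route((3, 3), (0, 0), (0, 2), {}))
    # Two robots are forecast in the middle of that aisle: the robot detours.
    print(plan_route((3, 3), (0, 0), (0, 2), {(0, 1): 2}))
```

With an empty forecast the planner takes the direct aisle. Once the middle cell is predicted to be busy, the longer detour becomes cheaper, which is the basic trade-off behind congestion-aware rerouting.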

Vulcan: Bringing Touch Into Automation

Vulcan addresses one of the biggest limitations of warehouse robotics: object manipulation. Equipped with force-feedback sensors, Vulcan detects slippage, measures grip pressure, and adjusts automatically. This enables it to handle nearly 75% of warehouse items, including irregular, soft, or deformable objects that previously required human dexterity.

Vulcan works in tandem with DeepFleet, receiving task assignments while providing human-like precision.
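The slip-and-adjust behavior described above can be sketched as a simple feedback loop in Python. This is an illustrative toy, not Vulcan's control software: the slip check is simulated by comparing the applied force with a hidden "required" force, and the names, step size, and force limits are all assumptions.

```python
from typing import Tuple


def grip_step(force_n: float, slip_detected: bool,
              max_force_n: float, step_n: float = 0.5) -> float:
    """Raise grip force by one step when slip is sensed, capped at the item's limit."""
    if not slip_detected:
        return force_n
    return min(force_n + step_n, max_force_n)


def simulate_grasp(required_force_n: float, max_force_n: float,
                   start_force_n: float = 1.0,
                   max_iters: int = 50) -> Tuple[float, bool]:
    """Tighten until the item stops slipping or the safe force limit is reached.

    Returns (final_force, holding). `required_force_n` stands in for the
    physical world; a real gripper would only see the slip signal.
    """
    force = start_force_n
    for _ in range(max_iters):
        slipping = force < required_force_n  # simulated slip sensor
        if not slipping:
            return force, True
        new_force = grip_step(force, slipping, max_force_n)
        if new_force == force:
            return force, False  # at the limit and still slipping: give up safely
        force = new_force
    return force, False


if __name__ == "__main__":
    print(simulate_grasp(required_force_n=2.2, max_force_n=5.0))  # sturdy item
    print(simulate_grasp(required_force_n=4.0, max_force_n=3.0))  # fragile item
```

The cap on force is what makes the loop suitable for fragile items: when the safe limit is not enough to stop the slip, the sketch reports failure instead of squeezing harder, and the item can be handed to another process or a person.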

Proteus and Sequoia: Operating in Human Spaces

Proteus, Amazon’s first fully autonomous mobile robot, navigates open warehouse floors alongside human workers, interpreting movement patterns and avoiding disruption. When combined with Sequoia, an AI-driven inventory and storage system, the platform identifies, sorts, and stores products up to 75% faster than manual processes.

Overall, Amazon demonstrates how Physical AI can scale in complex, dynamic environments, combining automation, learning, and adaptability at unprecedented levels.

Tesla and the Pursuit of General-Purpose Humanoids

Tesla is taking a broader approach to robotics, aiming to develop general-purpose humanoid robots capable of operating across multiple industries. Under Elon Musk’s leadership, the company plans to evolve from an automotive manufacturer into a fully integrated robotics and AI leader, applying the same iterative philosophy behind its autonomous vehicles.

In early 2026, Tesla unveiled Optimus Gen 3, its first humanoid robot engineered for mass production. Unlike machines designed for narrowly defined tasks, Optimus Gen 3 serves as a scalable platform that combines perception, reasoning, and dexterous manipulation. Equipped with learning capabilities, it can observe humans, adapt to diverse environments, and perform tasks that traditionally required specialized machines or human labor.

Optimus Gen 3: A Humanoid Built for Scale

Optimus Gen 3 represents the tangible outcome of Tesla’s broader vision: a general-purpose robot capable of performing a wide range of tasks across industries. Tesla plans to produce up to one million units annually, with a projected long-term price between $20,000 and $30,000, bringing humanoid robots closer to consumer accessibility than traditional industrial machines.

The robot features 22 degrees of freedom in its hands, powered by tendon-inspired actuation systems, allowing precise manipulation for tasks such as component assembly, warehouse operations, and fine motor activities. Its AI stack enables learning from video and human demonstrations, reducing the need for task-specific programming. Tesla has already integrated Optimus into Fremont factory operations, with commercial deployment expected by 2027. By standardizing hardware, software, and training pipelines, Tesla aims for rapid iteration, lower production costs, and continuous improvement through real-world deployment.

China: A Rising Leader in Physical AI

While Amazon and Tesla dominate in logistics and general-purpose humanoids, China is emerging as a global powerhouse in Physical AI research and deployment. Chinese companies and research institutions are rapidly developing robots for manufacturing, healthcare, retail, and urban logistics, with real-world testing taking place in cities such as Shenzhen and Shanghai.

Notable examples include:

- Ubtech Robotics’ Walker: a humanoid robot capable of complex mobility and human interaction tasks.

- Siasun’s AMR fleet: autonomous mobile robots deployed in factories and warehouses for logistics and assembly.

- JD.com delivery robots: autonomous units that deliver packages in urban environments, navigating streets and buildings efficiently.

- CloudMinds’ service robots: deployed in hospitals and commercial spaces to assist with patient care, cleaning, and guidance.

In addition, the Chinese approach emphasizes scale, integration, and government-industry collaboration, enabling faster deployment and innovation. By combining advanced AI perception, real-time coordination, and adaptive robotics, China is positioning itself as a key driver of the next generation of Physical AI technologies, alongside Western leaders like Amazon and Tesla.

Physical AI Across Industries

Physical AI extends beyond warehouses and factories. Any industry involving repetitive tasks, precision work, or human interaction can benefit—from logistics and manufacturing to construction, retail, and elder care. By combining adaptability, tactile intelligence, and learning, robots perform complex tasks efficiently while supporting human workers.

Healthcare highlights the potential of Physical AI. Hospitals and elder care facilities face staff shortages, rising patient demand, and high-stakes environments. Lessons learned here guide applications across other industries, showing how adaptive robots enhance human work.

Physical AI in Healthcare: Why It Matters

In healthcare, robots help automate repetitive, time-consuming, or physically demanding tasks, allowing clinicians to focus on activities requiring human empathy, judgment, and decision-making. Hospitals and elder care facilities benefit from enhanced efficiency, safety, and improved patient outcomes.

Senior Wellness and the Aging Population

With the global population aging rapidly—one in six people projected to be over 60 by 2030—there is an urgent need for support systems that ensure safety, monitor health, and provide social engagement. Physical AI robots help seniors with daily activities, fall detection, medication reminders, and cognitive exercises, improving both physical and mental wellness.

Healthcare Robots in Action

- Clinical Support: Robots like Grace and Moxi autonomously deliver medications, lab samples, and supplies, freeing staff for direct patient care.

- Rehabilitation: Robots such as the GR-1 assist patients in standing, walking, and balance training, recording precise data for therapy monitoring.

- Elderly Care: Robots provide companionship, cognitive exercises, and emergency alerts, helping reduce isolation and health risks.

Healthcare serves as a proving ground for Physical AI because it involves high-stakes, human-centric, and unpredictable environments, offering lessons applicable to other industries.

Broader Implications and Labor Transition

The adoption of Physical AI addresses global labor shortages by automating tasks that are dull, dirty, or dangerous. Human workers are redirected toward higher-skill roles such as robotics maintenance, AI operations, and system supervision. Amazon alone has upskilled over 700,000 employees to support its fleet of robots.

Physical AI does not replace work—it reshapes it. By fostering collaboration between humans and adaptive machines, it enhances productivity, safety, and quality of life across all industries—from logistics and manufacturing to healthcare and elder care.